Years of running production systems give you something that's not in the code. You learn the real-world usage patterns, the failures that only show up under load, the degradation behaviour that creeps in over months. You learn which alerts actually matter and which are noise.

That knowledge is earned incrementally, through building, observing, failing, and iterating. It lives in people, not in repositories.

I've been thinking about what happens to that knowledge when code generation speeds up by an order of magnitude.

Cognitive debt, briefly

Margaret-Anne Storey brought the term cognitive debt into software engineering earlier this year. It describes the gap between what AI-generated code does and how well developers actually understand it. Martin Fowler and Simon Willison have since amplified it, and it's gained serious traction. Five independent research groups converged on the same finding in a single week: AI agents generate code 5-7x faster than developers can comprehend it.

Storey followed up with a second post exploring the implications further. Anthropic's own research showed AI coding assistance reduces developer skill mastery by 17%. Developers who delegated code generation scored below 40% on comprehension tests.

I've written about this before, from the code review angle and from the "build fast, learn slow" angle, but there's a piece I haven't named until now.

Operational debt

Here's what I want to put a name to:

Operational debt is code generated faster than teams can earn the operational knowledge to run it reliably in production.

Cognitive debt is about understanding what the code does. Operational debt is about understanding what happens when it runs. They're related but distinct.

Operational knowledge is a specific thing. It's knowing the real-world usage patterns of your system. It's knowing which metrics actually correlate with user pain and which are just noise. It's understanding how the system degrades under pressure, not the clean failure modes you designed for, but the messy ones that emerge over time.
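One rough way to start separating signal from noise is to check how each metric moves against a direct measure of user pain. The sketch below is a minimal illustration, not a method from any particular team: the metric names (`p99_latency_ms`, `cpu_percent`, `queue_depth`), the sample values, and the use of support tickets per hour as a stand-in for user pain are all assumptions made up for the example.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no signal either way
    return cov / sqrt(var_x * var_y)


def rank_metrics(metrics, user_pain):
    """Return (name, correlation) pairs, strongest absolute correlation first."""
    scored = [(name, pearson(values, user_pain)) for name, values in metrics.items()]
    return sorted(scored, key=lambda pair: abs(pair[1]), reverse=True)


# Hypothetical hourly samples: support tickets opened per hour as "user pain".
user_pain = [1, 2, 1, 6, 9, 3]
metrics = {
    "p99_latency_ms": [210, 250, 220, 640, 900, 330],
    "cpu_percent": [55, 40, 62, 48, 51, 58],
    "queue_depth": [5, 5, 5, 5, 5, 5],
}

ranking = rank_metrics(metrics, user_pain)
for name, r in ranking:
    print(f"{name}: {r:+.2f}")
```

A ranking like this is only a starting point. A strong correlation tells you where to look, and the judgement about why a metric tracks user pain still comes from operating the system.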

This knowledge grows through lived experience with a running system. You can't generate it. You can't shortcut it. You earn it by operating the system with real users doing unpredictable things, over months and years. Speed up code generation 5-7x and this knowledge doesn't keep pace. It can't.

The pattern that's hard to ignore

Check the Claude status page. Check GitHub's recent reliability track record. Both companies leaning heavily into AI-generated code. Both struggling with operational reliability.

I know, correlation isn't causation. There are many possible explanations: rapid growth, scaling challenges, organisational complexity. But the pattern is there. The companies most aggressively adopting AI for their own codebases are also the ones with the most visible reliability issues. It's worth asking why.

My hypothesis is that when you generate code faster than your team can build operational understanding of it, your ability to run that code reliably degrades. Not because the code is bad. Because nobody has had time to learn how it behaves in the wild.

How they compound

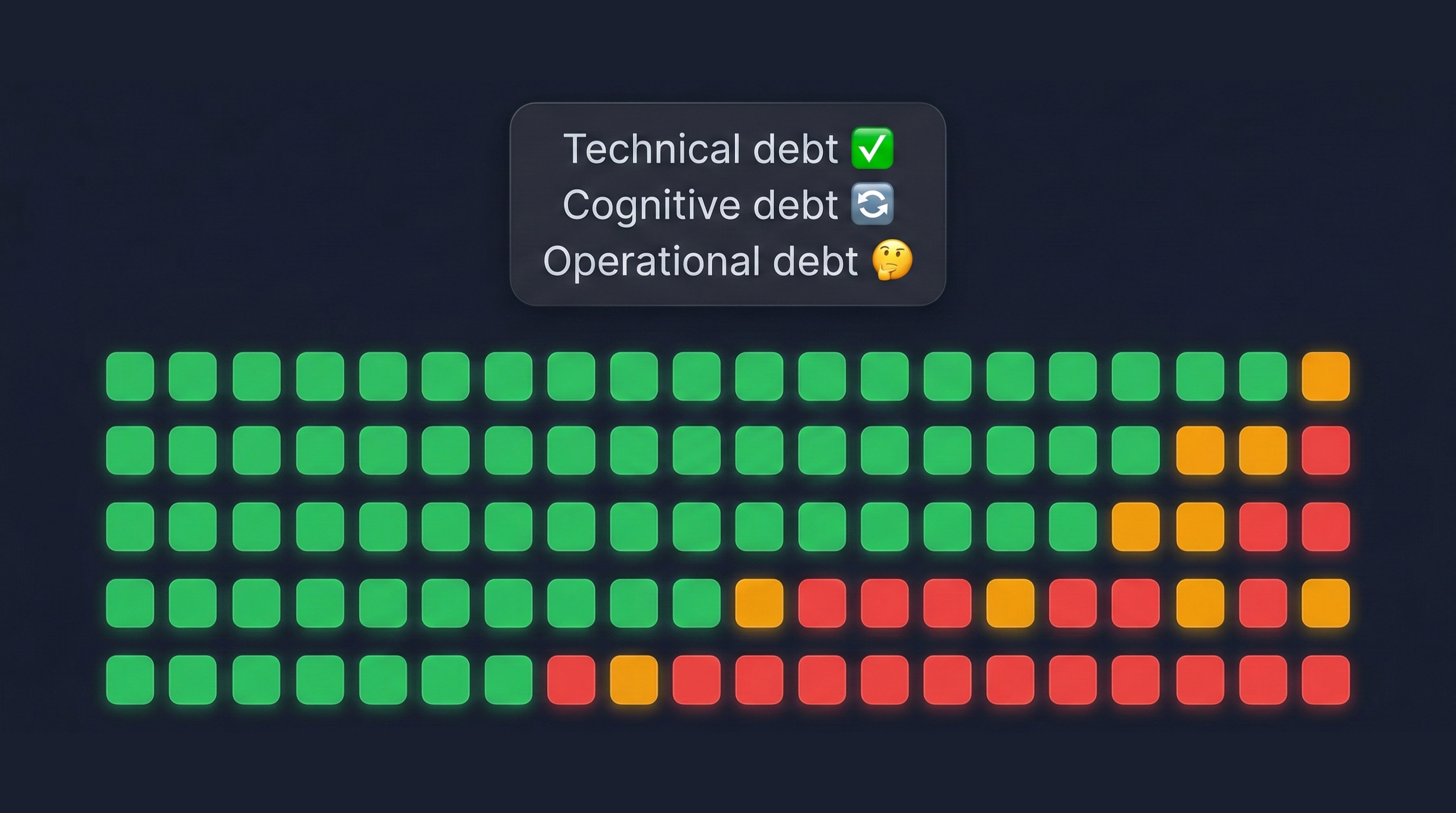

These problems compound:

- Cognitive debt: you can't understand the code fast enough

- Review bottleneck: you can't review it fast enough to maintain quality gates

- Operational debt: you can't earn production knowledge fast enough to run it reliably

Now put them together. Code you don't fully understand, that wasn't thoroughly reviewed, running in production environments whose operational characteristics you haven't had time to learn.

That's not a hypothetical. That's a reliability crisis happening right now.

What to do about it

I don't have a fully worked-out answer. But I think it starts with recognising that "how fast can we generate code" is the wrong metric. The right question is: "how well do we understand what we're running?"

The productivity gains are real. But productivity measured only in code output is measuring the wrong thing. I'm not saying we should slow down, but we shouldn't focus just on the speed of generation if our operational knowledge can't keep up.

Some things I think help:

- Match generation speed to learning speed. Give teams time to build operational understanding before the next wave of changes lands. Easier said than done when you need to keep up with the new pace of software development.

- Invest in observability before you invest in generation. If you can't see how your system behaves, generating more code just makes the blind spot bigger.

- Treat operational knowledge as a first-class asset. Document failure modes as you discover them. Run postmortems that capture institutional knowledge, not just action items. Make sure ops understand what changed recently.

- Be honest about the gap. If your team has generated more system than it can operate, that's a risk. Name it. Factor it into planning.
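One way to make that gap concrete enough to put in a planning document is a rough indicator of how much of what you run has had little time in production. The sketch below is a hypothetical heuristic, not an established metric. It assumes you can list deployed changes with a deploy date and a size in changed lines, and it treats anything live for fewer than `soak_days` (an arbitrary threshold) as not yet operationally understood. The `Change` type, the change names, and the numbers are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Change:
    name: str
    deployed_on: date
    lines_changed: int


def unsoaked_share(changes, today, soak_days=30):
    """Fraction of changed lines that have been live for fewer than soak_days."""
    total = sum(c.lines_changed for c in changes)
    if total == 0:
        return 0.0
    recent = sum(
        c.lines_changed
        for c in changes
        if (today - c.deployed_on).days < soak_days
    )
    return recent / total


# Hypothetical deploy history.
changes = [
    Change("billing-retry", date(2025, 1, 10), 400),
    Change("search-rewrite", date(2025, 3, 20), 1200),
    Change("auth-cleanup", date(2025, 3, 28), 400),
]

share = unsoaked_share(changes, today=date(2025, 4, 1))
print(f"{share:.0%} of recently changed code has under 30 days in production")
```

Lines changed is a crude proxy for risk, and 30 days is a guess. The point is to have a number the team can argue about rather than a feeling nobody names.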

The goal isn't to slow down. It's to make sure understanding keeps up with generation. Augmented development is powerful precisely because it lets experienced practitioners move faster. But that experience has to keep growing with the system, not just the speed.

This is thinking in progress. If you're seeing this pattern in your own teams, or if you think I'm wrong about the connection, I'd genuinely like to hear about it. Drop me a line.